Coincidence?

We're ready to celebrate and build (even more) amazing Drupal 8 websites.

On November 19 we'll put our Drupal 8 websites in the spotlight. Be sure to come back and check out our website.

By Michèle Weisz

Want to know more?

Contact us today or call us +32 (0)3 298 69 98

© 2015 Wunderkraut Benelux

DrupalCon 2015

People from across the globe who use, develop, design and support the Drupal platform will be brought together during a full week dedicated to networking, Drupal 8 and sharing and growing Drupal skills.

As we have active hiring plans, we’ve decided that this year’s approach should focus on meeting people who might want to work for Wunderkraut and on getting Drupal 8 out into the world.

As Signature Supporting Partner we wanted as many people as possible to attend the event. We managed to get 77 Wunderkrauts on the plane to Barcelona! From Belgium alone, 17 people are attending.

The majority of our developers will be participating in sprints (get-togethers for focused development work on a Drupal project), giving it all they've got together with all the other contributors at DrupalCon.

We look forward to an active DrupalCon week.

If you're at DrupalCon and feel like talking to us, just look for the folks in Wunderkraut carrot t-shirts or give Jo a call on his cell phone: +32 476 945 176.


How Wunderkraut feels about Drupal 8

Drupal 8 is coming and everyone is sprinting hard to get it over the finish line. To boost contributor morale we’ve made a motivational Drupal 8 video that will get them into the zone and tackling those last critical issues in no time.

[embedded content]


Once again Heritage Day was a huge success.

About 400 000 people visited Flanders' monuments and heritage sites last Sunday. The Open Monumentendag website received more than twice as many visitors as last year.

Visitors to the website organised their day out with the powerful search tool we built, which allowed them to search for activities and sights at their desired location. Not only could they search by location (province, zip code, city name, km range) but also by activity type, keywords, category and accessibility. Each search criterion was added as a (removable) filter for finding the perfect activity.

By clicking on the heart icon next to each activity, visitors drew up a list of favourites, ready for printing and taking along as a route map.

Our support team monitored the website, making sure visitors had a great digital experience and a good start to the day's activities.

Did you experience the ease of use of the Open Monumentendag website? Are you curious about the know-how we applied for this project? Read our Open Monumentendag case.

Breaking ground as Drupal's first Signature Supporting Partner

Drupal Association Executive Director Holly Ross is thrilled that Wunderkraut is the first to join and says: "Their support for the Association and the project is, and has always been, top-notch. This is another great expression of how much Wunderkraut believes in the incredible work our community does."

As Drupal Signature Supporting Partner we commit ourselves to advancing the Drupal project and empowering the Drupal community. We're very proud to be a part of it as we enjoy contributing to the Drupal ecosystem (especially when we can be quirky and fun, as CEO Vesa Palmu states).

Our contribution allowed the Drupal Association to:

- Complete Drupal.org's D7 upgrade, so they can now work on new features

- Hire a full engineering team committed to improving Drupal.org infrastructure

- Set the roadmap for Drupal.org success.

By Michèle Weisz

But in this post I'd like to talk about one of the disadvantages that here at Wunderkraut we pay close attention to.

A consequence of being able to build features in more than one way is that it's difficult to predict how different people will interact (or want to interact) with them. As a result, companies end up delivering solutions to their clients that, although they seem perfect, turn out in time to be less than ideal and sometimes outright counterproductive.

Great communication with the client and interest in their problems go a long way towards minimising this effect. But sometimes clients realise that certain implementations are not perfect and could be made better. When that happens, we are there to listen, adapt and reshape future solutions by taking these experiences into account.

One such recent example involved the use of a certain WYSIWYG library from our toolkit on a client website. Content editors were initially happy with the implementation, until they started using it to its full extent. Problems began to emerge, and editors ended up spending far more time than they should have on editing tasks. The client signalled this problem to us, and we corrected it by replacing the library. As a result, our client became happier with the solution, much more productive and less frustrated with their experience on their site.

We learned an important lesson in this process and we started using that new library on other sites as well. Polling our other clients on the performance of the new library revealed that indeed it was a good change to make.

A few years ago most requests started with: "Dear Wunderkraut, we want to build a new website and ... ". Nowadays we are addressed as: "Dear Wunderkraut, we have x websites in Drupal and are very happy with that, but we are now looking for a reliable partner to support & host ... ".

By the year 2011 Drupal had been around for just about 10 years. It was growing and changing at a fast pace. More and more websites were being built with it. Increasing numbers of people were requesting help and support with their website. And though there were a number of companies flourishing in Drupal business, few considered specific Drupal support an interesting market segment. Throughout 2011 Wunderkraut Benelux (formerly known as Krimson) was tinkering with the idea of offering support, but it was only when Drupal newbie Jurgen Verhasselt arrived at the company in 2012 that the idea really took shape.

Before his arrival, six different people, all with different profiles, were handling customer support in a weekly rotation system. This worked poorly. A developer trying to get his own job done plus deal with a customer issue at the same time was getting neither job done properly. Tickets got lost or forgotten, customers felt frustrated and problems were not always fixed. We knew we could do better. The job required uninterrupted dedication and constant follow-up.

That’s where Jurgen came into the picture. After years of day job experience in the graphic sector and nights spent on Drupal, he came to work at Wunderkraut and seized the opportunity to dedicate himself entirely to Drupal support. Within a couple of weeks his coworkers had handed over all their cases. They were relieved, he was excited! And most importantly, our customers were being assisted on a constant and reliable basis.

By the end of 2012 the first important change was brought about: Jurgen started working closely with colleague Stijn Vanden Brande, our Sys Admin. This team of two ensured that many of the problems that arose could be solved extremely efficiently. Because Wunderkraut is the hosting party as well as the Drupal party, no needless discussions with a hosting provider took place and, moreover, the hosting environment was well known. This meant we could find solutions with little loss of time, and we know that time is an important factor when a customer is under pressure to deliver.

In the course of 2013 our support system went from a well-meaning but improvised attempt to help customers in need to a fully qualified division within our company. What changed? We decided to classify customer support issues into: questions, incidents/problems and change requests and incorporated ITIL based best practices. In this way we created a dedicated Service Desk which acts as a Single Point of Contact after Warranty. This enabled us to offer clearly differing support models based on the diverse needs of our customers (more details about this here). In addition, we adopted customer support software and industry standard monitoring tools. We’ve been improving ever since, thanks to the large amount of input we receive from our trusted customers. Since 2013, Danny and Tim have joined our superb support squad and we’re looking to grow more in the months to come.

When customers call us for support we do quite a bit more than just fix the problem at hand. First and foremost, we listen carefully and double-check everything to ensure that we understand the customer correctly. This helps to take the edge off the huge pressure our customer may be experiencing. After that, we follow a list of dos and don’ts for valuable support.

- Do a quick scan of possible causes by getting a clear understanding of the symptoms

- Do look for the cause of course, but also assess possible quick fixes and workarounds to give yourself time to solve the underlying issue

- Do check if it’s a PEBKAC (problem exists between keyboard and chair)

- and finally, do test everything within the realm of reason.

The most basic don’ts that we swear by are:

- Never, ever apply changes to the foundation of a project.

- Support never covers a problem that takes more than two days to fix. At that point we escalate to development.

We are so dedicated to offering superior support to customers that, on explicit request, we cater to our customers’ customers. Needless to say, our commitment to support has yielded remarkable results and plenty of customer satisfaction (which makes us happy, too).

If your website is running Drupal 6, chances are it’s between 3 and 6 years old now, and once Drupal 8 comes out, support for Drupal 6 will be dropped. Luckily the support window has recently been extended to 3 months after Drupal 8 comes out. Still, that leaves you only a small window of time to migrate to the latest and greatest. But why would you?

There are many great things about Drupal 8 and it has something for everyone to love, but that should not be the only reason to upgrade. The tool itself will not magically increase traffic to your site or convince its users to buy more; what matters is how you use the tool.

So if your site is running Drupal 6 and hasn’t had large improvements in the last years, it might be time to investigate whether it needs a major overhaul to be up to par with the competition. If that’s the case, think about brand, concept, design, UX and all of that first, to understand how your site should work and what it should look like. Only then can we decide whether to go for Drupal 7 or Drupal 8.

If your site is still running well you might not even need to upgrade! Although community support for Drupal 6 will end a few months after the Drupal 8 release, we will continue to support Drupal 6 sites and work with you to fix any security issues we encounter and collaborate with the Drupal Security Team to provide patches.

My rule of thumb is that if your site uses only core Drupal and a small set of contributed modules, it’s ok to build a new website on Drupal 8 once it comes out. But if you have a complex website running on many contributed and custom modules, it might be better to wait a few months, maybe a year, until everything becomes stable.

So how does customer journey mapping work?

In this somewhat simplified example, we map the customer journey of somebody signing up for an online course. If you want to follow along with your own use case, pick an important target audience and a customer journey that you know is problematic for the customer.

1. Plot the customer steps in the journey

Write down the series of steps a client takes to complete this journey. For example “requests brochure”, “receives brochure”, “visits the website for more information”, etc. Put each step on a coloured sticky note.

2. Define the interactions with your organisation

Next, for each step, determine which people and groups the customer interacts with, like the marketing department, copywriter and designer, customer service agent, etc. Do the same for all objects and systems that the client encounters, like the brochure, website and email messages. You’ve now mapped out all people, groups, systems and objects that the customer interacts with during this particular journey.

3. Draw the line

Draw a line under the sticky notes. Everything above the line is “on stage”, visible to your customers.

4. Map what happens behind the curtains

Now we’ll plot the backstage parts. Use sticky notes of a different colour and collect the people, groups, actions, objects and systems that support the on stage part of the journey. In this example these would be the marketing team that produces the brochure, the printer, the mail delivery partner, the website content team, IT departments, etc. This backstage part is usually more complex than the on stage part.

5. How do people feel about this?

Now we get to the crucial part. Mark the parts that work well from the perspective of the person interacting with it with green dots. Mark the parts where people start to feel unhappy with yellow dots. Mark the parts where people get really frustrated with red. What you’ll probably see now is that your client starts to feel unhappy much sooner than employees or partners. It could well be that on the inside people are perfectly happy with how things work while the customer gets frustrated.

What does this give you?

Through this process you can immediately start discovering and solving customer experience issues because you now have:

- A user centred perspective on your entire service/product offering

- A good view on opportunities for innovation and improvement

- Clarity about which parts of the organisation can be made responsible to produce those improvements

- A shareable format that is easy to understand

Mapping your customer journey is an important first step towards customer centred thinking and acting. The challenge is learning to see things from your customer's perspective, and that's exactly what a customer journey map enables you to do. Based on the opportunities you identified from the customer journey map, you’ll want to start integrating the multitude of digital channels, tools and technology already in use into a cohesive platform. In short: a platform for digital experience management! That's the topic of our next post.

In combination with the FacetAPI module, which allows you to easily configure a block or a pane with facet links, we created a page displaying search results containing contact type content and a facets block on the left hand side to narrow down those results.

One of the struggles with FacetAPI is the URLs of the individual facets. While Drupal turns the ugly GET 'q' parameter into clean URLs, FacetAPI just concatenates any extra query parameters, which leads to Real Ugly Paths. The FacetAPI Pretty Paths module tries to change that by rewriting those into human-friendly URLs.

Our challenge involved altering the paths generated by the facets, but with a slight twist.

Due to the project's architecture, we were forced to replace the full view mode of a node of the bundle type "contact" with a single search result based on the nid of the visited node. This was a cheap way to avoid duplicating functionality and wasting precious time. We used the CTools custom page manager to take over the node/% page and added a variant which is triggered by a selection rule based on the bundle type. The variant itself doesn't use the panels renderer but redirects the visitor to the Solr page, passing the nid as an extra argument with the URL. This resulted in a path like this: /contacts?contact=1234.

With this snippet, the contact query parameter is passed to Solr which yields the exact result we need.

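/**
 * Implements hook_apachesolr_query_alter().
 */
function myproject_apachesolr_query_alter($query) {
  // Restrict the results to the node whose nid was passed in the URL.
  if (!empty($_GET['contact'])) {
    // Cast to int so only a numeric nid ever reaches Solr.
    $query->addFilter('entity_id', (int) $_GET['contact']);
  }
}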

The result page with our single search result still contains facets in a sidebar. Moreover, the URLs of those facets looked like this: /contacts?contact=1234&f[0]=im_field_myfield..... Now we faced a new problem. The ?contact=1234 part was conflicting with the rest of the search query. This resulted in an empty result page, whenever our single search result, node 1234, didn't match with the rest of the search query! So, we had to alter the paths of the individual facets, to make them look like this: /contacts?f[0]=im_field_myfield.

This is how I approached the problem.

If you look carefully in the API documentation, you won't find any hooks that allow you to directly alter the URLs of the facets. Gutting the FacetAPI module is quite daunting. I started looking for undocumented hooks, but quickly abandoned that approach. Then, I realised that FacetAPI Pretty Paths actually does what we wanted: alter the paths of the facets to make them look, well, pretty! I just had to figure out how it worked and emulate its behaviour in our own module.

Turns out that most of the facet-generating functionality is contained in a set of adaptable, loosely coupled, extensible classes registered as CTools plugin handlers. Great! This means that I just had to find the relevant class, extend it and override the relevant methods with our custom logic.

Facet URLs are generated by classes extending the abstract FacetapiUrlProcessor class. FacetapiUrlProcessorStandard extends the base class and already does all of the heavy lifting, so I decided to take it from there. I just had to create a new class, implement the right methods and register it as a plugin. In the folder of my custom module, I created a new folder plugins/facetapi containing a new file called url_processor_myproject.inc. This is my class:

/**
 * @file
 * A custom URL processor for myproject.
 */

/**
 * Extension of FacetapiUrlProcessorStandard.
 */
class FacetapiUrlProcessorMyProject extends FacetapiUrlProcessorStandard {

  /**
   * Overrides FacetapiUrlProcessorStandard::normalizeParams().
   *
   * Strips the "q" and "page" variables from the params array.
   * Custom: strips the "contact" variable from the params array too.
   */
  public function normalizeParams(array $params, $filter_key = 'f') {
    return drupal_get_query_parameters($params, array('q', 'page', 'contact'));
  }

}
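To make the effect of the override easier to see outside Drupal, here is a minimal plain-PHP sketch. The helper myproject_strip_params() is hypothetical and only mimics the top-level exclusion that drupal_get_query_parameters() performs; the facet value im_field_myfield:5 is an invented example.

```php
<?php

/**
 * Hypothetical stand-in for the exclusion step of drupal_get_query_parameters().
 *
 * Returns $params without the top-level keys listed in $exclude.
 */
function myproject_strip_params(array $params, array $exclude) {
  return array_diff_key($params, array_flip($exclude));
}

// Query of the search page as reached through the node redirect.
$params = array(
  'q' => 'contacts',
  'contact' => '1234',
  'f' => array('im_field_myfield:5'),
);

$clean = myproject_strip_params($params, array('q', 'page', 'contact'));

// Only the facet filter is left to build the facet link from.
echo '/contacts?' . urldecode(http_build_query($clean)) . "\n";
```

Because "contact" is gone before the facet links are built, clicking a facet no longer carries the single-node restriction along.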

I registered my new URL Processor by implementing hook_facetapi_url_processors in the myproject.module file.

/**
 * Implements hook_facetapi_url_processors().
 */
function myproject_facetapi_url_processors() {
  return array(
    'myproject' => array(
      'handler' => array(
        'label' => t('MyProject'),
        'class' => 'FacetapiUrlProcessorMyProject',
      ),
    ),
  );
}

I also included the .inc file in the myproject.info file:

files[] = plugins/facetapi/url_processor_myproject.inc

Now I had a new registered URL Processor handler. But I still needed to hook it up with the correct Solr searcher on which the FacetAPI relies to generate facets. hook_facetapi_searcher_info_alter allows you to override the searcher definition and tell the searcher to use your new custom URL processor rather than the standard URL processor. This is the implementation in myproject.module:

/**
 * Implements hook_facetapi_searcher_info_alter().
 */
function myproject_facetapi_searcher_info_alter(array &$searcher_info) {
  // Make every searcher use our custom URL processor.
  foreach ($searcher_info as &$info) {
    $info['url processor'] = 'myproject';
  }
}

After clearing the cache, the correct path was generated per facet. Great! Of course, the paths still don't look pretty and contain those way too visible and way too ugly query parameters. We could enable the FacetAPI Pretty Paths module, but it would conflict with our own URL processor, since the searcher uses either one class or the other, not both. One way to solve this problem would be to extend the FacetapiUrlProcessorPrettyPaths class, since it is derived from the same FacetapiUrlProcessorStandard base class, and override its normalizeParams() method.

But that's another story.

What is a views display extender?

Display extender plugins let you add options or configuration to a view regardless of the type of display (e.g. page, block, ...).

For example, if you wanted to allow site users to add certain metadata to the rendered output of every view display regardless of display type, you could provide this option as a display extender.

What we can do with it

Let's see how to implement such a plugin. As an example, we will add some metadata (useless meta tags, just for the example) to the document head when the view is displayed.

We will call the display extender plugin HeadMetadata (id: head_metadata) and we will implement it in a module called views_head_metadata.

The implementation

Make our plugin discoverable

Views does not discover display extender plugins through an info hook as usual. For this particular type of plugin, Views keeps a list in its views.settings configuration object.

You need to add your plugin ID to the list views.settings.display_extenders.

To do so, I recommend implementing hook_install() (as well as hook_uninstall()) in the module's install file. To manipulate config objects, you can look at my previous notes on CMI.
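A minimal sketch of what these two hooks could look like in views_head_metadata.install, assuming the plugin ID head_metadata used in this post and that display_extenders is stored as a plain list of IDs:

```php
<?php

/**
 * @file
 * Install and uninstall hooks for views_head_metadata (sketch).
 */

/**
 * Implements hook_install().
 */
function views_head_metadata_install() {
  $config = \Drupal::configFactory()->getEditable('views.settings');
  $extenders = $config->get('display_extenders') ?: [];
  // Register the plugin ID only once.
  if (!in_array('head_metadata', $extenders)) {
    $extenders[] = 'head_metadata';
    $config->set('display_extenders', $extenders)->save();
  }
}

/**
 * Implements hook_uninstall().
 */
function views_head_metadata_uninstall() {
  $config = \Drupal::configFactory()->getEditable('views.settings');
  $extenders = $config->get('display_extenders') ?: [];
  // Drop our plugin ID and reindex the list.
  $extenders = array_values(array_diff($extenders, ['head_metadata']));
  $config->set('display_extenders', $extenders)->save();
}
```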

Make the plugin class

As seen in the previous post on Drupal 8 plugins, you need to implement the class in the plugin type namespace, extend the base class for this type of plugin, and add the metadata annotation.

In the case of the display extender plugin, the namespace is Drupal\views_head_metadata\Plugin\views\display_extender, the base class is DisplayExtenderPluginBase, and the metadata annotation is defined in \Drupal\views\Annotation\ViewsDisplayExtender.

The display extender plugin methods are nearly the same as those of the display plugins; you can think of them as a set of methods to alter the display plugin.

The important methods to understand are:

- defineOptionsAlter(&$options): Defines the array of options your plugin will declare and save. A sort of schema for your plugin.

- optionsSummary(&$categories, &$options): Adds a category (a section of the Views admin interface) if you want one, and defines your option settings (which category they are in, and the value to display as the summary).

- buildOptionsForm(&$form, FormStateInterface $form_state): Where you build the form(s) for your plugin, linked of course with validate and submit methods.
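Put together, the class could look roughly like the sketch below. It is not the original implementation: the single "description" tag, the labels and the choice to store the values in a display option named head_metadata are all assumptions made for illustration.

```php
<?php

namespace Drupal\views_head_metadata\Plugin\views\display_extender;

use Drupal\Core\Form\FormStateInterface;
use Drupal\views\Plugin\views\display_extender\DisplayExtenderPluginBase;

/**
 * Head metadata display extender (sketch).
 *
 * @ViewsDisplayExtender(
 *   id = "head_metadata",
 *   title = @Translation("Head metadata"),
 *   help = @Translation("Adds metadata tags to the document head."),
 *   no_ui = FALSE
 * )
 */
class HeadMetadata extends DisplayExtenderPluginBase {

  /**
   * {@inheritdoc}
   */
  public function defineOptionsAlter(&$options) {
    // Assumption: one hypothetical "description" tag, stored on the display.
    $options['head_metadata'] = ['default' => ['description' => '']];
  }

  /**
   * {@inheritdoc}
   */
  public function optionsSummary(&$categories, &$options) {
    $metadata = $this->displayHandler->getOption('head_metadata');
    $categories['head_metadata'] = [
      'title' => $this->t('Head metadata'),
      'column' => 'second',
    ];
    $options['head_metadata'] = [
      'category' => 'head_metadata',
      'title' => $this->t('Head metadata'),
      'value' => empty($metadata['description']) ? $this->t('None') : $this->t('Set'),
    ];
  }

  /**
   * {@inheritdoc}
   */
  public function buildOptionsForm(&$form, FormStateInterface $form_state) {
    // Only build our form when our own section is being edited.
    if ($form_state->get('section') == 'head_metadata') {
      $metadata = $this->displayHandler->getOption('head_metadata');
      $form['#title'] .= $this->t('Head metadata');
      $form['head_metadata']['#tree'] = TRUE;
      $form['head_metadata']['description'] = [
        '#type' => 'textfield',
        '#title' => $this->t('Description'),
        '#default_value' => isset($metadata['description']) ? $metadata['description'] : '',
      ];
    }
  }

  /**
   * {@inheritdoc}
   */
  public function submitOptionsForm(&$form, FormStateInterface $form_state) {
    if ($form_state->get('section') == 'head_metadata') {
      $this->displayHandler->setOption('head_metadata', $form_state->getValue('head_metadata'));
    }
  }

}
```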

Generate the metadata tags in the document head

Now that our settings are available on every view display, we need to use them to generate the tags in the document head, as promised.

To act on the view rendering we will use the hook made for that, hook_views_pre_render($view), and the render array property #attached.

Implement that hook in the .module file of our views_head_metadata module. Let's see:
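A possible implementation is sketched here. It assumes the extender saved its values in a display option named head_metadata, as an array of meta tag name and content pairs; that storage layout is an assumption of this sketch.

```php
<?php

use Drupal\views\ViewExecutable;

/**
 * Implements hook_views_pre_render().
 */
function views_head_metadata_views_pre_render(ViewExecutable $view) {
  // Assumption: the extender stores name => content pairs in this option.
  $metadata = $view->display_handler->getOption('head_metadata');
  if (empty($metadata)) {
    return;
  }
  foreach ($metadata as $name => $content) {
    if ($content === '' || $content === NULL) {
      continue;
    }
    $tag = [
      '#tag' => 'meta',
      '#attributes' => ['name' => $name, 'content' => $content],
    ];
    // html_head entries are [render element, unique key] pairs.
    $view->element['#attached']['html_head'][] = [$tag, 'views_head_metadata_' . $name];
  }
}
```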

For the example we are going to implement an area that presents some links and text in a custom way. I'm not sure it's really useful, but that's not the point of this article.

The Plugin system

In this first post on plugins I will briefly introduce the concept. Those who have already used the CTools plugin system already know what the plugin system is for.

For those who don't know about it, the plugin system is a way to let other modules implement their own use case for an existing feature. Think of a field formatter (provide your own render array for a particular field display) or a widget (provide your own form element for a particular field type), etc.

The plugin system has three base elements:

Plugin Types

The plugin type is the central controlling class that defines how the plugins of this type will be discovered and instantiated. The type will describe the central purpose of all plugins of that type; e.g. cache backends, image actions, blocks, etc.

Plugin Discovery

Plugin Discovery is the process of finding plugins within the available code base that qualify for use within this particular plugin type's use case.

Plugin Factory

The Factory is responsible for instantiating the specific plugin(s) chosen for a given use case.

Detailed information: https://www.drupal.org/node/1637730

In our case Views is responsible for those implementations, so we won't go further into that. Let's now see how to implement a plugin definition.

The Plugin definitions

The existing documentation on plugin definitions is still a little abstract for understanding how it really works (https://www.drupal.org/node/1653532).

Simply put, a plugin is in most cases a class, namespaced within the namespace of the plugin type. In our example this is: \Drupal\module_name\Plugin\views\area

So if I implement a custom Views area plugin in my module, the class will be located at module_name/src/Plugin/views/area/MyAreaHandler.php

To know where to implement a plugin definition for a plugin type, you can in most cases look at the module docs, or directly at the source code of the module (looking at an example of a definition will be enough).

In most cases, the module that implements a plugin type provides a base class for the plugin definitions. In our example, Views provides a base class for areas: \Drupal\views\Plugin\views\area\AreaPluginBase

Drupal also provides a base class for plugin definitions, for when you implement a custom plugin type: \Drupal\Component\Plugin\PluginBase

Your custom plugin definition class must also have annotation metadata, which is defined by the module that implements the plugin type. In our example: \Drupal\views\Annotation\ViewsArea

In the case of Views you will also need to implement hook_views_data() in the module_name.views.inc file. There you inform Views about the name and metadata of your area handler.

Hands on implementation

So we have a custom module; let's call it module_name for the example :)

We will create the class that implements our plugin definition and give it this plugin ID: my_custom_site_area.

We save this file as module_name/src/Plugin/views/area/MyCustomSiteArea.php
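As an illustration, such a class might look like the following sketch. The text and the single "Home" link are placeholders invented for this example, not the content of the original handler.

```php
<?php

namespace Drupal\module_name\Plugin\views\area;

use Drupal\Core\Link;
use Drupal\Core\Url;
use Drupal\views\Plugin\views\area\AreaPluginBase;

/**
 * Views area handler showing custom text and links (sketch).
 *
 * @ViewsArea("my_custom_site_area")
 */
class MyCustomSiteArea extends AreaPluginBase {

  /**
   * {@inheritdoc}
   */
  public function render($empty = FALSE) {
    // Honour the "display even if the view has no result" option.
    if ($empty && empty($this->options['empty'])) {
      return [];
    }
    return [
      'text' => [
        '#markup' => $this->t('Some custom text.'),
      ],
      'links' => [
        '#theme' => 'item_list',
        '#items' => [
          // Placeholder link to the front page.
          Link::fromTextAndUrl($this->t('Home'), Url::fromRoute('<front>'))->toRenderable(),
        ],
      ],
    ];
  }

}
```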

Now we just have to implement hook_views_data() and, yes, that's all: you can use your awesome Views area handler in any view and any area.

Define this hook in the file module_name/module_name.views.inc
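A minimal sketch of that hook, assuming the plugin ID my_custom_site_area from above (the title and help strings are placeholders):

```php
<?php

/**
 * @file
 * Views integration for module_name (sketch).
 */

/**
 * Implements hook_views_data().
 */
function module_name_views_data() {
  // Global (non table-specific) handlers live under the "views" key.
  $data['views']['my_custom_site_area'] = [
    'title' => t('My custom site area'),
    'help' => t('Displays custom text and links.'),
    'area' => [
      // Must match the ID in the @ViewsArea annotation.
      'id' => 'my_custom_site_area',
    ],
  ];
  return $data;
}
```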

There are three types of configuration data:

The Simple Configuration API

- Used to store unique configuration objects.

- Namespaced by the module name.

- Can contain a list of structured variables (string, int, array, ...).

- Default values are stored in YAML: config/install/module_name.config_object_name.yml

- Has a schema defined in config/schema/module_name.schema.yml

Code example :
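A minimal sketch of reading and writing simple configuration. The object name module_name.settings and the key items_per_page are hypothetical.

```php
<?php

// Read-only access: \Drupal::config() returns an immutable config object.
$config = \Drupal::config('module_name.settings');
$items_per_page = $config->get('items_per_page');

// Editable access: change a value and save it back to the active storage.
\Drupal::configFactory()
  ->getEditable('module_name.settings')
  ->set('items_per_page', 20)
  ->save();
```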

The States

- Not exportable; simple values that depend heavily on the environment.

- Values can differ between environments (e.g. last_cron and maintenance_mode have different values on your local and on the production site).
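For comparison, a short sketch of the State API. The key module_name.last_import is hypothetical; system.cron_last is the key core uses for the last cron run.

```php
<?php

$state = \Drupal::state();

// Read a value maintained by core.
$last_cron = $state->get('system.cron_last');

// Store, read (with a default) and delete an environment-specific value.
$state->set('module_name.last_import', time());
$last_import = $state->get('module_name.last_import', 0);
$state->delete('module_name.last_import');
```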

The Entity Configuration API

- Configuration objects that can have multiple instances (e.g. views, image styles, CKEditor profiles, ...).

- New configuration types can be defined in a custom module.

- Have a defined schema in YAML.

- Not fieldable.

- Values can be exported and stored as YAML, and can be shipped by modules in config/install.

Code example :
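A small sketch using image styles as the configuration entity type. It assumes a style with the machine name thumbnail exists, as it does in a standard install.

```php
<?php

use Drupal\image\Entity\ImageStyle;

// Load a single configuration entity by its machine name.
$style = ImageStyle::load('thumbnail');
if ($style) {
  $label = $style->label();
}

// Or go through the entity storage to load all entities of the type.
$storage = \Drupal::entityTypeManager()->getStorage('image_style');
foreach ($storage->loadMultiple() as $id => $image_style) {
  // $id is the machine name, $image_style the config entity object.
  $labels[$id] = $image_style->label();
}
```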

https://www.drupal.org/node/1809494

Store configuration objects in the module:

Config objects (not states) can be stored in a module and imported during the module's install process.

To export a config object into a module you can use the configuration synchronisation UI at /admin/config/development/configuration/single/export

Select the configuration type, then the object, copy the content and store it in your custom module's config/install directory, following the naming convention shown below the textarea.

You can also use the Features module, which is now a simple configuration packager.

If you want to update the config object after the module has been installed, you can use the following drush command:

Configuration override system

Remember the $conf variable in settings.php in D6/D7 for overriding variables?

In D8, you can also override values from the configuration API:
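A sketch of such overrides in settings.php, using the $config array that replaces $conf. The values shown are examples only.

```php
<?php

// In settings.php: the first key is the config object name,
// the following keys are the path to the value inside that object.
$config['system.site']['name'] = 'My Drupal 8 site';
$config['system.performance']['css']['preprocess'] = FALSE;
```

Code that reads the value through \Drupal::config('system.site')->get('name') then receives the overridden value, while the stored configuration stays untouched.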

You can also do overrides at runtime.

Example: getting a value in a specific language:
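A minimal sketch, assuming French ("fr") is an installed language on the site:

```php
<?php

$language_manager = \Drupal::languageManager();

// Remember the language currently used for configuration overrides.
$original_language = $language_manager->getConfigOverrideLanguage();

// Switch the override language ("fr" is only an example langcode).
$language_manager->setConfigOverrideLanguage($language_manager->getLanguage('fr'));
$site_name_fr = \Drupal::config('system.site')->get('name');

// Restore the previous override language.
$language_manager->setConfigOverrideLanguage($original_language);
```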

Drupal provides storage for overrides, and a module can specify its own way of overriding. For more in-depth information, see:

https://www.drupal.org/node/1928898

Configuration schema

The config objects of the simple Config API and of the configuration entity API have attached schemas, defined in module_name/config/schema/module_name.schema.yml

These schemas are not mandatory, but if you want translatable strings, configuration forms or consistent exports, you must take the time to implement the schema for your configuration object. However, if you don't want to, you can just implement the toArray() method in your config entity class.

Examples, docs and information: https://www.drupal.org/node/1905070

Configuration dependencies calculation

By default, dependencies are declared in the .info file of the module that defines the config object, as in D6/D7.

But a config entity can implement the calculateDependencies() method to provide dynamic dependencies based on the config entity's values.

Think of a config entity that stores field display information for specific view modes of content entities: the modules that provide the fields and formatters need to be listed as dependencies, but these are dynamic, depending on the content entity display.

More information : https://www.drupal.org/node/2235409

Resources

Migrate in Drupal 8

Migrate is now included in Drupal core to provide the upgrade path from the 6.x and 7.x versions to Drupal 8.

Drupal 8 has two new modules:

Migrate: « Handles migrations »

Migrate Drupal: « Contains migrations from older Drupal versions. »

Neither of these modules has a user interface.

« Migrate » contains the core framework classes, the schemas and definitions of the destination, source and process plugins, and finally the migration config entity schema and definition.

« Migrate Drupal » contains implementations of destination, source and process plugins for Drupal 6 and 7. You can use it or extend it; it's ready to use. But this module doesn't contain the configuration to migrate all your data from your older Drupal site to Drupal 8.

Core provides migration configuration entity templates, located in each core module that needs one, in a folder named 'migration_templates'. To find all the templates you can use this command in your Drupal 8 site:

To do a Drupal core to core migration, you will find all the information here: https://www.Drupal.org/node/2257723. A UI for upgrading is in progress.

A migration framework

Let's have a look at each big piece of the migration framework:

Source plugins

Drupal provides an interface and base classes for migration source plugins:

- SqlBase: Base class for SQL sources; you need to extend this class to use it in your migration.

- SourcePluginBase: Base class for every custom source plugin.

- MenuLink: For D6/D7 menu links.

- EmptySource (id: empty): Source plugin that returns an empty row.

- ...
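To illustrate extending SqlBase, here is a sketch of a custom source plugin. The plugin ID, the class name and the legacy_article table with its columns are all hypothetical.

```php
<?php

namespace Drupal\module_name\Plugin\migrate\source;

use Drupal\migrate\Plugin\migrate\source\SqlBase;

/**
 * Reads rows from a hypothetical "legacy_article" table (sketch).
 *
 * @MigrateSource(
 *   id = "module_name_legacy_article"
 * )
 */
class LegacyArticle extends SqlBase {

  /**
   * {@inheritdoc}
   */
  public function query() {
    // The query runs against the source database connection.
    return $this->select('legacy_article', 'a')
      ->fields('a', ['id', 'title', 'body']);
  }

  /**
   * {@inheritdoc}
   */
  public function fields() {
    // Source fields made available to the process mapping.
    return [
      'id' => $this->t('Article ID'),
      'title' => $this->t('Title'),
      'body' => $this->t('Body text'),
    ];
  }

  /**
   * {@inheritdoc}
   */
  public function getIds() {
    // Unique source key, used to track what has been migrated.
    return [
      'id' => [
        'type' => 'integer',
        'alias' => 'a',
      ],
    ];
  }

}
```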

Process plugins

This is the equivalent of the D7 MigrateFieldHandler, but it is not limited to fields or to a particular field type.

Its purpose is to transform a raw value into something acceptable to your new site's schema.

The plugin's transform() method is in charge of transforming your $value, or of skipping the entire row if needed.

If the source property has multiple values, transform() is called on each one.

Drupal provides migration process plugins in each core module that needs them (for the core upgrade).

To find out which ones exist and where they are located, you can use this command:

Destination plugins

Destination plugins are the classes that handle where your data is saved in the schema of the new Drupal 8 site.

Drupal provides a lot of useful destination classes :

- DestinationBase : Base class for migrate destination classes.

- Entity (id: entity) : Base class for entity destinations.

- Config (id: config) : Class for importing configuration entities.

- EntityBaseFieldOverride (id: entity:base_field_override): Class for importing base field overrides.

- EntityConfigBase : Base class for importing configuration entities.

- EntityImageStyle (id: entity:image_style): Class for importing image_style.

- EntityContentBase (id: entity:%entity_type): The destination class for all content entities lacking a specific class.

- EntityNodeType (id: entity:node_type): Class for migrating node types.

- EntityFile (id: entity:file): Class for migrating files.

- EntityFieldInstance: Class for migrating field instances.

- EntityFieldStorageConfig: Class for migrating field storage.

- EntityRevision, EntityViewMode, EntityUser, Book...

- And many more…

Builder plugins:

"Builder plugins implement custom logic to generate migration entities from migration templates. For example, a migration may need to be customized based on the data that is present in the source database; such customization is implemented by builders." - doc API

This is used in the user module: the builder creates a migration configuration entity from a migration template, then adds field mappings to the process based on the data in the source database. (@see /Drupal/user/Plugin/migrate/builder/d7/User)

Id map plugins:

"It creates one map and one message table per migration entity to store the relevant information." - doc API

This is where rollback, update and the map creation are handled.

Drupal provides the Sql plugin (@see /Drupal/migrate/Plugin/migrate/id_map/Sql) based on the core base class PluginBase.

And so far we have only been talking about core.

All the examples (that is, the docs for developers) are in core!

About now :

While there is *almost* a simple UI for Drupal-to-Drupal migrations in Drupal 8, Migrate can be used for every kind of data input. Work is in progress in http://Drupal.org/project/migrate_plus to bring a UI, more source plugins, more process plugins and examples. There is already a CSV source plugin and a pending patch for the code example. The primary goal of « migrate plus » is to offer all the features (UI, sources, destinations...) of the Drupal 7 version.

Concrete migration

(migrations with Drupal 8 made easy)

I need to migrate some content with images, attached files and categories from custom tables in an external SQL database to Drupal.

To get started quickly:

- Install Drush 8 (dev master) and Drupal Console.

- Create the custom module (in the code, I assume the module name is “example_migrate”):

$ drupal generate:module

or create the module yourself; you only need the info.yml file.

- Enable migrate and the migrate_plus tools:

$ drupal module:install migrate_tools

or

$ drush en migrate_tools

- What we have in Drupal for the code example:

- a taxonomy vocabulary : ‘example_content_category’

- a content type ‘article’

- some fields: body, field_image, field_attached_files, field_category

- Define the connection to your external database in settings.php:

We are going to tell the migrate source to use this database target. This happens in each migration configuration file; it is a configuration property used by the SqlBase source plugin:

This is one of the reasons SqlBase has a wrapper for the select query: you need to call it in your source plugin, as $this->select(), instead of building the query by hand.
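As a minimal sketch of the connection mentioned above, assuming a MySQL source: the key name `external`, the database name and the credentials are hypothetical placeholders to replace with your own values.

```php
<?php
// settings.php (excerpt). Drupal reads connections from $databases[KEY][TARGET].
// 'external' is a hypothetical key chosen for this sketch.
$databases['external']['default'] = [
  'driver' => 'mysql',
  'database' => 'legacy_site',  // Hypothetical source database name.
  'username' => 'legacy_user',  // Hypothetical credentials.
  'password' => 'secret',
  'host' => '127.0.0.1',
  'port' => '3306',
  'prefix' => '',
];
```

With a connection declared this way, the source section of a migration would point at it through the SqlBase `key` property (here, `key: external`).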

N.B. Each time you add a custom yml file to your custom module, you need to uninstall and reinstall the module for the config/install files to be imported. To avoid that, you can import a single migration config file by copying and pasting it into the configuration synchronisation section under admin/config.

The File migration

The content has images and files to migrate. In this example I assume that the source database has a unique ID for each file, in a specific table that holds the path of the file to migrate.

We need a migration from these files to Drupal 8 file entities, so we write the source plugin for the file migration:

File: src/Plugin/migrate/source/ExampleFile.php

We now have the source class and our source fields, and each row generates a path to the file on my local disk.

But we need to transform our external file path into a local Drupal public file system URI, and for that we need a process plugin. In our case the process plugin takes the external file path and file name as arguments and returns the new Drupal URI.

File: src/Plugin/migrate/process/ExampleFileUri.php
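As a rough sketch (an illustration, not the plugin from this example), the conversion such a process plugin performs could look like the plain PHP function below. The function name, its signature and the `migrated` target directory are hypothetical; in a real process plugin this logic would sit inside transform().

```php
<?php
/**
 * Builds a public:// URI from an external file path and a file name.
 *
 * Hypothetical helper: name, signature and directory are assumptions.
 */
function example_migrate_file_uri(string $filepath, string $filename, string $directory = 'migrated'): string {
  // Keep only the base name so no source directory leaks into the URI.
  $basename = basename($filename !== '' ? $filename : $filepath);
  // Normalise the target directory: no leading or trailing slashes.
  $directory = trim($directory, '/');
  return 'public://' . ($directory !== '' ? $directory . '/' : '') . $basename;
}

// Prints "public://migrated/report.pdf".
echo example_migrate_file_uri('/var/legacy/files/2015/report.pdf', 'report.pdf'), PHP_EOL;
```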

We need another process plugin to transform our source date values (created, changed) into timestamps. As the date format is the same across the source database, this plugin will be reused in the content migration for the same purpose:

File: src/Plugin/migrate/process/ExampleDate.php
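The date conversion itself can be sketched in plain PHP as follows. This is only an illustration under assumptions: the function name is hypothetical, the source dates are assumed to be in the Y-m-d H:i:s format mentioned later for the content, and UTC is an assumed default time zone. A real process plugin would wrap this logic in transform().

```php
<?php
/**
 * Converts a 'Y-m-d H:i:s' string to a Unix timestamp, or NULL if invalid.
 *
 * Hypothetical helper: name, signature and default time zone are assumptions.
 */
function example_migrate_date_to_timestamp(string $value, string $timezone = 'UTC'): ?int {
  $format = 'Y-m-d H:i:s';
  $date = DateTimeImmutable::createFromFormat($format, $value, new DateTimeZone($timezone));
  // createFromFormat() returns FALSE when the string cannot be parsed.
  if ($date === false) {
    return null;
  }
  // Reject dates that PHP silently rolls over, such as 2015-02-31.
  if ($date->format($format) !== $value) {
    return null;
  }
  return $date->getTimestamp();
}

var_dump(example_migrate_date_to_timestamp('2015-11-19 08:30:00'));
var_dump(example_migrate_date_to_timestamp('not a date'));
```

Returning NULL for invalid input leaves the decision to the caller, for example skipping the row or falling back to the current time.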

For the destination we use the core plugin: entity:file.

Now we have to define our migration config entity file. This is where the source, destination and process (field mappings) are defined:

File: config/install/migrate.migration.example_file.yml

We are done with the file migration. You can execute it with the drush command from migrate_tools (part of the migrate_plus project):

The Term migration

The content has categories to migrate.

We need to import them as taxonomy terms. In this example I assume the categories don't have unique IDs; the category is just a column of the article table holding the category name…

First we create the source :

File: src/Plugin/migrate/source/ExampleCategory.php
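Because the categories have no IDs of their own, the source has to yield each distinct name once (in SQL terms, a DISTINCT select on that column). The plain PHP sketch below shows the idea on already-fetched rows. The function name and the `category` column name are hypothetical, and this is not the source plugin from this example.

```php
<?php
/**
 * Returns the distinct, non-empty category names found in article rows.
 *
 * Hypothetical helper: the 'category' column name is an assumption.
 */
function example_migrate_distinct_categories(array $rows, string $column = 'category'): array {
  $names = [];
  foreach ($rows as $row) {
    $name = trim((string) ($row[$column] ?? ''));
    if ($name !== '') {
      // Using the name as array key removes duplicates while keeping order.
      $names[$name] = $name;
    }
  }
  return array_values($names);
}

$rows = [
  ['title' => 'First', 'category' => 'News'],
  ['title' => 'Second', 'category' => ' News '],
  ['title' => 'Third', 'category' => ''],
  ['title' => 'Fourth', 'category' => 'Events'],
];
// Prints "News, Events".
echo implode(', ', example_migrate_distinct_categories($rows)), PHP_EOL;
```

The name itself then serves as the source identifier of the term, which is what lets the content migration look the term up later.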

And we can now create the migration config entity file :

File: config/install/migrate.migration.example_category.yml

That's done. To execute it:

The Content migration

The content from the source has HTML content, a raw excerpt, an image, attached files, categories and the creation/update dates in the format Y-m-d H:i:s.

We create the source plugin:

File: src/Plugin/migrate/source/ExampleContent.php

Now we can create the content migration config entity file :

File: config/install/migrate.migration.example_content.yml

Finally, execute it :

Group the migration

Thanks to migrate_plus, you can specify a migration group for your migration.

You need to create a config entity for that:

File: config/install/migrate_plus.migration_group.example.yml

Then, in your migration config YAML file, be sure to have a migration_group line next to the label:

You can then use the command to run the migrations together; the order of execution will depend on the migration dependencies:
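To make the idea of dependency-driven ordering concrete, here is a small plain PHP sketch of a depth-first ordering. It only illustrates the principle and is not how migrate_tools is implemented. The function name is hypothetical, and the migration IDs are assumed from the config file names used in this article.

```php
<?php
/**
 * Orders migration IDs so that each one comes after its dependencies.
 *
 * @param array $dependencies
 *   Map of migration ID => list of required migration IDs.
 */
function example_migrate_execution_order(array $dependencies): array {
  $ordered = [];
  $visiting = [];
  $visit = function (string $id) use (&$visit, &$ordered, &$visiting, $dependencies): void {
    if (in_array($id, $ordered, true)) {
      return;
    }
    if (isset($visiting[$id])) {
      throw new RuntimeException("Circular dependency on $id");
    }
    $visiting[$id] = true;
    // Place every required migration before the current one.
    foreach ($dependencies[$id] ?? [] as $required) {
      $visit($required);
    }
    unset($visiting[$id]);
    $ordered[] = $id;
  };
  foreach (array_keys($dependencies) as $id) {
    $visit((string) $id);
  }
  return $ordered;
}

$order = example_migrate_execution_order([
  'example_content' => ['example_file', 'example_category'],
  'example_file' => [],
  'example_category' => [],
]);
// Prints "example_file, example_category, example_content".
echo implode(', ', $order), PHP_EOL;
```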

I hope that you enjoyed our article.

Best regards,

At Studio.gd we love the Drupal ecosystem and it became very important to us to give back and participate.

Today we're proud to announce a new module that we hope will help you !

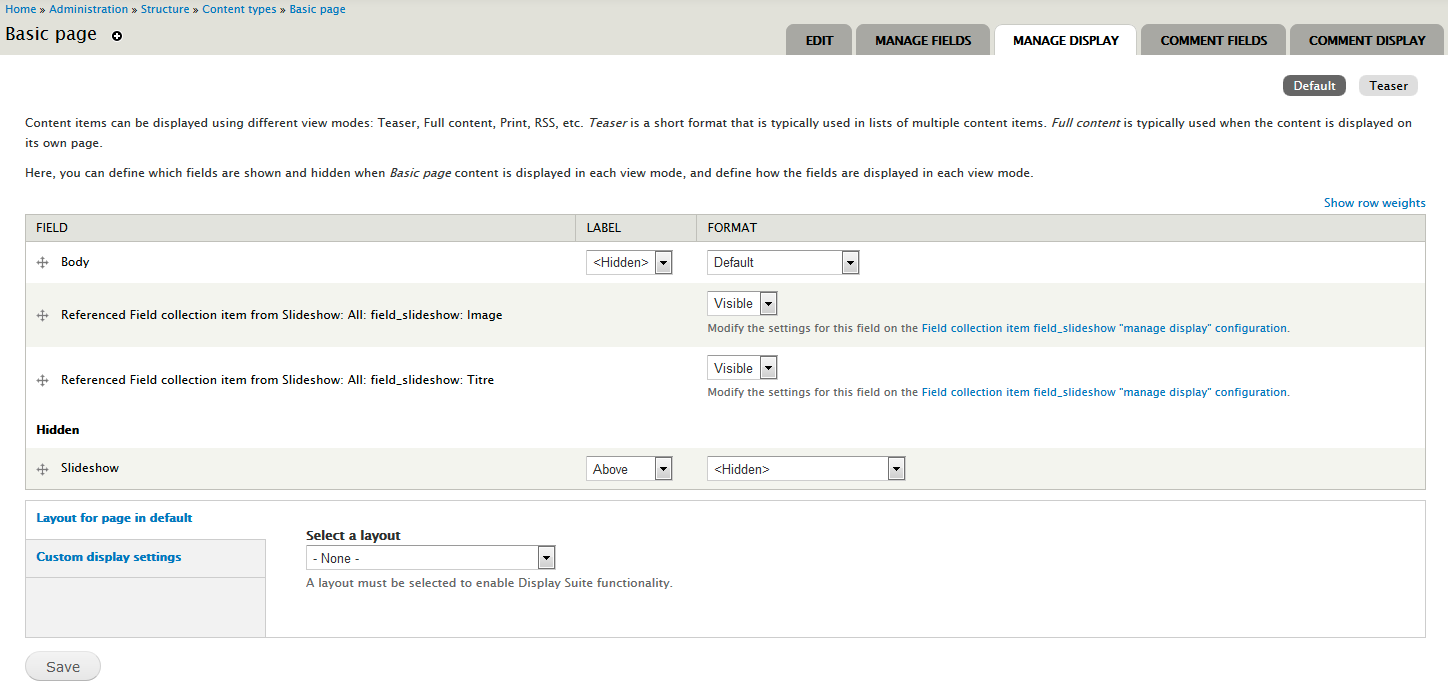

Inline Entity Display module will help you handle the display of referenced entity fields directly in the parent entity.

For example, if you reference a "Tags" taxonomy from an Article node, you will be able to display the tags' fields directly in the manage display of the article. It can become very useful with more complex referenced entities, such as field collections.

VIEW THE MODULE: https://www.drupal.org/project/inline_entity_display

Features

- You can control, for each compatible reference field instance, whether the fields from the referenced entities are available as extra fields. Disabled by default.

- You can manage the visibility of the referenced entities' fields on the manage display form. Hidden by default.

- View modes are added to represent this context and to manage custom display settings for the referenced entities' fields in this context: {entity_type}_{view_mode}. Example: "Node: Teaser" is used to render the referenced entities' fields when you reference an entity from a node and view this node as a teaser. If there are no custom settings for this view mode, fields are rendered using the default view mode settings.

- Extra data attributes are added on the default fields markup, so the field of the same entity can be identified.

Compatible with Field group on manage display form.

Compatible with Display Suite layouts on manage display form.

Requirements

- Entity API

- One of the compatible reference field modules.

Tutorials

simplytest.me/project/inline_entity_display/7.x-1.x

The simplytest.me install of this module will come automatically with these modules: entity_reference, field_collection, field_group, display suite.


We are currently developing a similar module for Drupal 8, but more powerful and more flexible. Stay tuned!

Today we are talking about the Access Policy API, what it does, and how you can use it with guest Kristiaan Van den Eynde. We’ll also cover Visitors as our module of the week.

For show notes visit:

https://www.talkingDrupal.com/472

Topics

- What is the Access Policy API

- Why does Drupal need the Access Policy API

- How did Drupal handle access before

- How does the Access Policy API interact with roles

- Does a module exist that shows a UI

- What is the difference between Policy Based Access Control (PBAC), Attribute Based Access Control (ABAC) and Role Based Access Control (RBAC)

- How does Access Policy API work with PBAC, ABAC and RBAC

- Can you apply an access policy via a recipe

- Is there a roadmap

- What was it like going through Pitch-burgh

- How can people get involved

Resources

Guests

Kristiaan Van den Eynde - kristiaanvandeneynde

Hosts

Nic Laflin - nLighteneddevelopment.com nicxvan

John Picozzi - epam.com johnpicozzi

Aubrey Sambor - star-shaped.org starshaped

MOTW Correspondent

Martin Anderson-Clutz - mandclu.com mandclu

- Brief description:

- Have you ever wanted a Drupal-native solution for tracking website visitors and their behavior? There’s a module for that

- Module name/project name:

- Brief history

- How old: created in Mar 2009 by gashev, though recent releases are by Steven Ayers (bluegeek9)

- Versions available: 8.x-2.19, which works with Drupal 10 and 11

- Maintainership

- Actively maintained

- Security coverage

- Test coverage

- Documentation guide is available

- Number of open issues: 20 open issues, none of which are bugs against the 8.x branch

- Usage stats:

- Over 6,000 sites

- Module features and usage

- A benefit of using a Drupal-native solution is that you retain full ownership over your visitor data. Not sharing that data with third parties can be important for data protection regulations, as well as data privacy concerns.

- You also have a variety of reports you can access directly within the Drupal UI, including top pages, referrers, and more

- There is a submodule for geoip lookups using Maxmind, if you also want reporting on what region, country, or city your visitors hail from

- It provides drush commands to download a geoip database, and then update your data based on geoip lookups using that database

- It should be mentioned that the downside of using Drupal as your analytics solution is the potential performance impact and also a likely uptick in usage for hosts that charge based on the number of dynamic requests served

Headless websites have taken the industry by storm, promising to deliver unique brand experiences that enable customer loyalty. Using a headless approach for your project allows you to combine technologies that would normally be siloed due to language or server constraints.

Typically when we talk about a headless Drupal architecture, we are referring to using Drupal for its strength as a content management system (CMS), but using a framework like React or Vue to drive the frontend. This separation of concerns allows your teams to focus on using the tools they know best—ultimately delivering a better product.

The single most important metric in commerce implementations is response time. A website’s overall responsiveness can directly affect the conversion rate and the bottom line. According to a study by Portent in 2019, "The highest e-commerce conversion rates occur between 0 and 2 seconds, spanning an average of 8.11% e-commerce conversion rate at less than 1 second, down to a 2.20% e-commerce conversion rate at a 5 second load time." Let's explore why traditional commerce implementations are so slow and why headless might just be the solution.

Why Use Drupal as a Commerce Platform?

Before we consider the frontend, we need a robust, secure backend platform to deliver our data and business logic. One of the many reasons Drupal is a great candidate for headless, or really any CMS build, is its inherent flexibility and security. Drupal's fieldable entities mean you can structure your CMS to fit your data. Drupal is regularly screened for vulnerabilities and has a robust process to identify and fix security issues. This is especially important in commerce implementations where proprietary data is often pulled from a Product Information Management (PIM) system like Akeneo.

Drupal's true power comes in the form of a massive library of community-contributed and maintained modules. A great example of this is the Drupal Commerce suite maintained by Centarro. Drupal Commerce out-of-the-box provides a robust set of entities and plugins that provide a complete commerce experience. Commerce can be further customized by contrib modules that provide everything from payment processors (like Stripe or PayPal) to shipping integrations (like UPS or FedEx). Community-contributed modules are the cornerstone of the Drupal platform, and the projects we build make them possible.

Why Is Traditional Commerce Slow?

In a traditional Magento or Drupal Commerce implementation, we often create frontend markup on the backend before delivering the page to the user. As we generate this markup, we make calls to various APIs like shipping rate calculators. Once we have this complete HTML document, we send it to the browser. The browser then parses this markup and scans it for additional documents like CSS, JavaScript, and images. Once it gathers all of this data, it turns it into an interactable web page. All of these things make the average page load speed roughly 7 seconds on desktop. That's quite a gap from our target of under 2 seconds.

To alleviate the work the backend has to do to render a page, we've come up with some pretty clever tricks. One example is using Edge Side Includes (ESIs). ESIs work by loading the majority of page content from cache, then replacing specific placeholders with dynamic calls to the server. Since the server doesn't need to render the complete markup, we can often achieve faster load times. Drupal Core offers BigPipe, a module that similarly renders the majority of a page from cache, then replaces placeholders with dynamic content. Oftentimes these solutions come at a high complexity and frequently cause problems related to caching. They also don't work for content that is highly dynamic like category pages with facets and filters.
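The placeholder idea can be sketched in a few lines of plain PHP. This is a conceptual illustration only: real ESI processing happens at the proxy or CDN, and BigPipe streams its replacements to the browser. The function name and the `<!--placeholder:NAME-->` token format are hypothetical.

```php
<?php
/**
 * Fills dynamic placeholders in otherwise cached markup.
 *
 * @param string $cached_markup
 *   Markup taken from cache, containing <!--placeholder:NAME--> tokens.
 * @param callable[] $renderers
 *   Map of placeholder name => callback returning the dynamic fragment.
 */
function example_fill_placeholders(string $cached_markup, array $renderers): string {
  $replacements = [];
  foreach ($renderers as $name => $render) {
    // Only the dynamic fragments are computed for each request.
    $replacements['<!--placeholder:' . $name . '-->'] = $render();
  }
  return strtr($cached_markup, $replacements);
}

$cached = '<h1>Shop</h1><!--placeholder:cart-->';
// Prints "<h1>Shop</h1><span>3 items</span>".
echo example_fill_placeholders($cached, [
  'cart' => function () {
    return '<span>3 items</span>';
  },
]), PHP_EOL;
```

The sketch also hints at the limitation mentioned above: when most of a page is dynamic, as on a faceted category page, little is left to serve from cache.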

How Does Headless Help?

When we implement a headless website, we can think of the frontend as less like a web page and more as an application. A properly designed React (or other JS frontend framework) app can be lightweight and heavily cacheable. On initial page load, we load our entire application into working memory. This means that as a user navigates through the site, they are actually interacting with a single-page application that does not require a page reload to show new content.

The reason we can get away with not reloading the page is that data can be asynchronously fed to the frontend. This means that as a user is browsing the site we can preload resources like images and linked pages. When we can run expensive operations independently of a user's browsing experience, we can make website response times appear to be instantaneous—or more within our targeted 0-2 second range.

How Do We Get There?

On the backend, you still need a robust, secure CMS to feed data to the frontend and handle complex or session actions (add to cart/checkout validation). This is where Drupal is an easy choice. One of the easiest ways to feed data to a frontend is via JSON data. Drupal Core provides the JSON:API module which allows you to easily expose your content as filterable JSON objects. This means you can leverage the strength of the Drupal Community while giving your frontend room to prefetch and asynchronously validate data.
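As a small illustration of what "filterable JSON" means in practice, the sketch below builds a JSON:API collection URL with a filter and a page limit. The helper name and the example.com base URL are hypothetical. The `/jsonapi/{entity_type}/{bundle}` path and the `filter[...]` and `page[limit]` query parameters follow the conventions of the core JSON:API module.

```php
<?php
/**
 * Builds a JSON:API collection URL with optional filters and a page limit.
 *
 * Hypothetical helper: name and signature are assumptions.
 */
function example_jsonapi_url(string $base_url, string $entity_type, string $bundle, array $filters = [], int $limit = 10): string {
  $query = ['page' => ['limit' => $limit]];
  if ($filters) {
    // Short filter form: filter[field_name]=value.
    $query['filter'] = $filters;
  }
  return rtrim($base_url, '/') . '/jsonapi/' . $entity_type . '/' . $bundle . '?' . http_build_query($query);
}

// Published articles, five per page; brackets are percent-encoded in the output.
echo example_jsonapi_url('https://example.com', 'node', 'article', ['status' => 1], 5), PHP_EOL;
```

A frontend would request URLs like this in the background, which is what makes prefetching the next page of content possible.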

Building a world-class commerce website necessitates a world-class toolset, but even more so a world-class team. Drupal has proven to be a reliable CMS capable of delivering highly custom experiences. When this is paired with a well-built frontend, load times become instantaneous, and conversion rates increase!

Well, that was exciting! Releasing an enterprise-level Drupal Commerce solution into the wild is a great opportunity to take a moment to reflect: How on earth did we pull that off? This client had multiple stores, multiple product offerings, each with its own requirements for shopping, ordering, payment, and fulfillment flow. And some pretty specific ideas about how the User Experience (UX) was to unfold.

Drupal Commerce offers many possible avenues into the world of customization; here are a few we followed.

Can't I Just Config My Way Out of This?

Yes! But no, probably not. Yes, you should absolutely set up a Proof-of-Concept build using just the tools and configurations at your disposal in the admin user interface (UI). How close did you get? Does your implementation need just a couple of custom fields and a theming, or will it need a ground-up approach? This will help you make more informed estimations of the level of effort and number of story points.

Bundle Up

The Drupal Commerce ecosystem, much like Drupal as a whole, is populated by Entities—fieldable and categorizable into types, or bundles. Think about your particular situation and make use of these categorizations if you can.

Separate your physical and digital products, or your hard goods and textiles. Distinct bundles give you independent fieldsets that you can group with view_displays.

Order Types (admin/commerce/config/order-types/default/edit/fields) are the main organizing principle here: if you have a category of unpaid reservations vs. fully paid orders—that sounds like two separate order_types and two separate checkout flows. Softgoods and hardgoods are tracked for fulfillment in two separate third-party systems? Separate bundles. Keep in mind, though, that a Drupal order is an entity and is a single bundle. An order can have multiple order_item types, but only a single order_type.

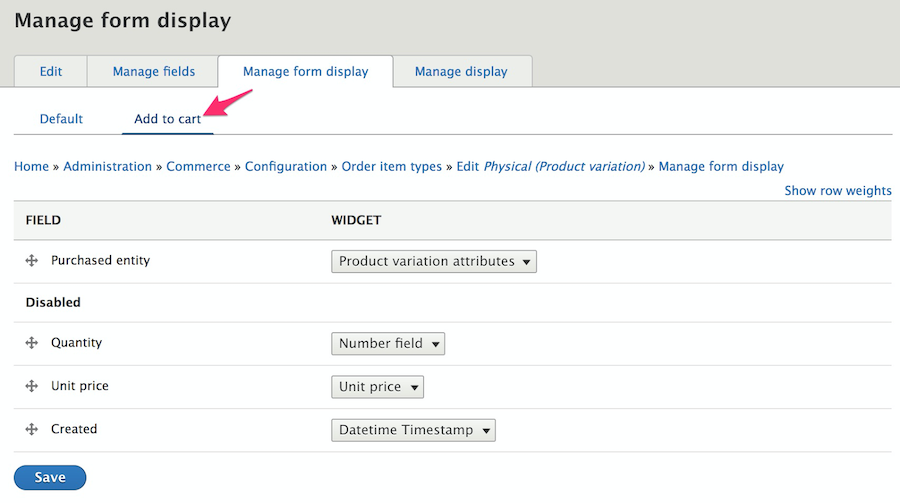

Order Item Types (admin/commerce/config/order-item-types/default/edit/fields) bridge the gap between products and orders. Order Item bundles include Purchased Entity, Quantity, and Unit Price by default, but different product categories may need different extra fields on the Add to Cart form.

Adding to Cart

Drupal Commerce offers a path to add Add-to-Cart forms to Product views through the Admin UI.

You could alter the form through the field handler, the formatter, or the template of course, but we wanted more direct control and flexibility. We created a route with parameters for product and variation IDs, so we could put the form in a modal and reach it from a CTA placed anywhere. The route's controller, given the product variation, other route parameters, and the page context, decided which order_item_type form to present in the modal.

    class PurchasableTextileModalForm extends ModalFormBase {

      use AjaxHelperTrait;

      /**
       * {@inheritdoc}
       */
      public function buildForm(array $form, FormStateInterface $form_state, Product $product = NULL, ProductVariation $variation = NULL, $order_type = 'textile', $is_edit_form = FALSE) {
        $form = parent::buildForm($form, $form_state, $product, $variation);
        ...

We extended the form from FormBase, incorporated some custom Traits, and used \Drupal\commerce_cart\Form\AddToCartForm as a model. We learned some fun lessons on the way:

- Don't be shy when loading services—who knows what you'll wind up needing.

- Keep in mind that the form_state's order_item is not the same as the PurchasedEntity. Fields associated with an Order Type are assigned at the form_state level; fields on an Order Item bundle are properties of the PurchasedEntity.

- Want to check your cart to see if this particular product variation is already a line-item? \Drupal::service('commerce_cart.order_item_matcher')->match() is your friend.

- When validating, recall again that PurchasedEntity is an Entity, which means it uses the Entity Validation API. The AvailabilityChecker comes for free; you may add custom ones simply by registering them in your_module.services.yml. Or you may want to create a custom Constraint.

Our add-to-cart modal forms (which we reused on the cart view page for editing existing line-items) turned out to be works of art. We had vanilla JavaScript calculating totals in real-time, and we had a service calculating complex allocation data, also in real-time, triggered by AJAX. Custom widgets saved values to order_item fields, which triggered custom Addon OrderProcessors.

    class AddonOrderProcessor implements OrderProcessorInterface {

      /**
       * {@inheritdoc}
       */
      public function process(OrderInterface $order) {
        foreach ($order->getItems() as $order_item) {
          ...

Recognizing how intricate and interconnected this functionality was going to be, we committed ourselves early on to the necessity of building the forms from scratch.

Wait, What Am I Getting?

The second step of the experience: seeing how full your cart has become after an exuberant shopping session.

Out-of-the-box, Commerce offers a View display at "/cart" of a user's every order item, grouped by order_type.

We wanted separate pages for each order_type, so first we overrode the routing established by commerce_cart and pointed to our own controller which took the order_type as a route parameter.

    class RouteSubscriber extends RouteSubscriberBase {

      /**
       * {@inheritdoc}
       */
      protected function alterRoutes(RouteCollection $collection) {
        // Override "/cart" routing.
        if ($route = $collection->get('commerce_cart.page')) {
          $route->setDefaults(array(
            '_controller' => ...

That controller passed the order_type as the display_id argument to the commerce_cart_form view, where we had built out multiple displays.

We had a lot of information to show on the cart page that was not available to the View UI. We had the results of our custom allocation service that we wanted to show in a column with other Purchased Entity information. We had add-on fees we wanted to show in the line item's subtotal column. This stuff wasn't registered as fields associated with an entity in Drupal, these were custom calculations.

We registered custom field handlers that we could select in the Views UI, placing them into columns of the table display and styling them with custom field templates. The render function of these field plugins had access to all the values returned in its ResultRow by the view for our custom calculations:

    $values->_relationship_entities['commerce_product_variation']->get('product_id')

Let's Transact!

The checkout flow has little customization available off-the-shelf through admin pages. You can reorder the sections on the pages and the Shipping and Tax modules will automatically create panes and sections for you, but otherwise, you get what you get, unless you roll your own.

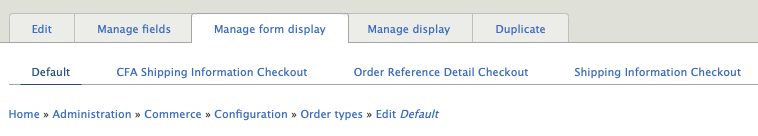

A custom Checkout Flow starts with a Plugin (so watch your Annotations!) which need not do too much more than define the array of steps. On the other hand, we extended the buildForm() and tucked in a fair amount of alterations, both globally and to specific checkout steps.

Each checkout step can have multiple panes (also plugins: @CommerceCheckoutPane) each with its own form -build, -validate, and -submit functions.

We built custom panes for each step, using shared Traits, extending and reusing existing functionality wherever we could. With a cache clear, our custom panes were available for ordering and placement in the Checkout flow UI.

We managed the order_type-specific fields and collected them in the field_displays tab in the admin UI. We could then easily call for those fields by form_mode in a buildPaneForm() function and render them. We used a similar technique in the validate and submit functions.

    $form_display = EntityFormDisplay::collectRenderDisplay($this->order, 'order_reference_detail_checkout');
    $form_display->extractFormValues($this->order, $pane_form, $form_state);
    $form_display->validateFormValues($this->order, $pane_form, $form_state);

Integration Station

This project had a half-dozen in-coming and out-going integration points with outside systems, including customer info, tax and shipping calculator services, the payment gateway, and an order processing service to which the completed order was finally submitted.

Each integration was a separate and idiosyncratic adventure; it would not be terribly enlightening to relate them here. But we are quite sure that, rather than having custom functionality shoe-horned here and there in a number of hook_alters spread over the whole codebase, keeping our checkout forms tidily in individual files and classes helped the development process immeasurably.

And Finally, Ka-ching

The commerce platform space is a landscape crowded with lumbering giants. It was awfully satisfying to see Team Drupal put together a great-looking, custom solution as robust as the big boys, in likely less time and certainly far more tightly integrated with the content, marketing, and SEO side of things. The depth and flexibility that make Drupal such a powerful platform for content management and presentation can also be used to deeply and efficiently customize all aspects of the shopping and checkout experience with Drupal Commerce.

Enterprise organizations are increasingly looking at Drupal as a reliable, open source option for developing their online presence—contributing and benefiting from the active community base and potentially taking advantage of cutting-edge, decoupled capabilities.

With over 10 years of Drupal experience and implementations ranging from small to complex, we are often asked to recommend the best approach for building a Drupal site. The answer, as with many questions, is: it depends. For some clients, the best choice is to build a traditional, coupled Drupal website. For other clients, it makes sense to build a completely decoupled solution using Drupal as the backend. And for others, the best solution is somewhere in between. Many factors determine which is the best approach for a particular client and their situation.

One important factor in deciding what approach to take is to understand the needs and skills of the people that will use and maintain the system. The two main users to consider are the content creators and others that will work in the system daily and the developers that will build and maintain the system.


Considering the Content Manager

For the content creators and content managers that work directly in the content management system (CMS), having an easy-to-use content admin system is key. Drupal has increasingly focused on this experience and has provided many features with this in mind. With traditional Drupal, content editors can quickly create pages leveraging the drag-and-drop capabilities of Layout Builder. Inline editing allows content editors to make quick changes to the content without diving deep into the content admin UI. And, content preview is available to review before publishing the content to the website.

All of this is also possible in a decoupled solution, but the developers must build and maintain this functionality or cobble a solution together from existing technologies. If the project requirements already require changes to Drupal's out-of-the-box functionality, building from scratch may be easier.

Considering the Development Team

The development team's skills are also an important consideration. If you have a team that has deep technical knowledge of a technology (or a desire to develop that knowledge), that can have an impact on the recommended approach. For instance, if your team has never themed a Drupal site before, but has experience with React, using a decoupled approach would fit nicely with the team's skills.

Like any framework, it takes time to learn how to theme Drupal sites. If you have a small team that is spread thin or maybe you don't have a team, a coupled approach using Layout Builder or Acquia's Site Studio could give your content editing team the flexibility it needs without requiring much help from a development team.

Considering the Digital Strategy

The overall digital strategy is an important factor to consider as well. Will the platform support a single site or is this a key piece to a multisite, multi-brand digital platform? Is Drupal the only platform involved, or is Drupal a part of a broader digital experience platform (DXP) that includes CRMs, Commerce platforms, a CDP, and other platforms? Whether working with Acquia, the open digital experience platform built on top of Drupal, or connecting into other tools—Drupal is designed to make these connections easy.

Drupal is a great platform to integrate with other platforms. Many integrations are easy to implement by installing a community module. Drupal provides a robust migration system that makes pulling data into Drupal easy to do. Drupal also makes it easy to pull data out of Drupal using REST APIs or GraphQL.

If you are only building one website with the platform and it is primarily for marketing your organization, a traditional Drupal build probably makes sense. The more systems you are integrating and the more channels you want to use the content in, the more sense it makes to build using a decoupled approach.

Considering the Requirements

The requirements are another important factor to consider. Requirements help us define the solution. Just as important as the requirements are how flexible the requirements are. Drupal provides lots of functionality out of the box. When you add the availability of more than 40,000 free community-contributed modules, Drupal can meet many requirements with very little effort.

As you define the requirements, you should compare them to what Drupal can do. And where there is a module that meets most, but not all, of the requirements, decide whether it is possible to change the requirements. The more Drupal satisfies the requirements that you would need to build yourself in a decoupled approach, the better the coupled Drupal approach makes sense. If you find that your requirements will require a lot of customization to Drupal, a progressively decoupled or even fully decoupled approach may make sense.

Considering the Budget and Timeline

Every project has budget and timeline constraints. If you are on a tight timeline (and budget), building a coupled Drupal platform is often a solid choice. Drupal provides so many out-of-the-box features that, with flexible requirements, you can build a website in a very short period of time. For instance, we were able to build a small Drupal site using Site Studio in just a few days. The more expansive your budget and timeline, the more options you have in approach.

The Versatility of Drupal

After you've done the analysis and settled on the best approach, understand that circumstances may change. Because Drupal can handle any approach on the spectrum, you can evolve your approach over time. And because Drupal has been built API-first, you can move from a coupled approach to a fully decoupled one gradually.

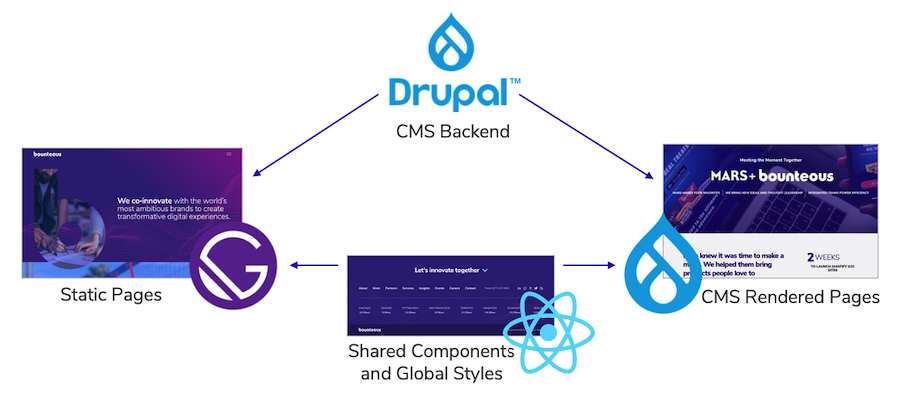

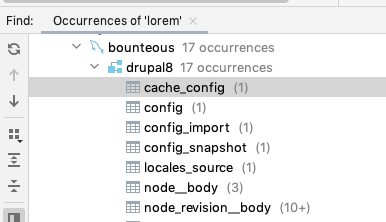

Here at Bounteous, our website was originally built with a coupled approach. However, we recently decided to refresh the site. Drupal has allowed us to decouple parts of the site that make sense to decouple but keep the other parts coupled. As needs dictate, we can continue to decouple only the parts that we need to.

Drupal is a versatile system that can be the centerpiece of your DXP. How you use it will depend on the factors above and others that you find important. Regardless of the approach you take, Drupal is versatile enough to change as your needs change in the future.

Contributing to Drupal is one of the most important things we can do as a part of the Drupal community. Considering that the platform is open source, contributions are essential to keep Drupal advancing. When it comes to contributions, there are a number of ways to get involved—and they don't all involve coding. I recently had the opportunity to contribute in the form of speaking at DrupalCon about a module our team rescued.

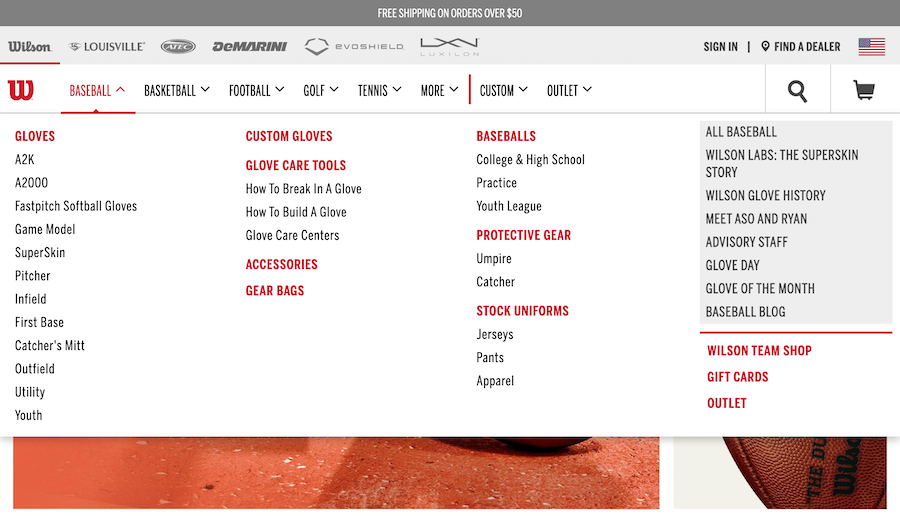

The Origins of Our DrupalCon Session

Our Drupal team has been working with the TB Mega Menu module since 2017. As we worked on various projects and tried to meet each client's different needs, we ended up making many updates and changes to the module. We eventually realized this module was no longer being maintained, so we applied for ownership and, ultimately, ended up rescuing the abandoned project.

We saw first-hand the community benefit that came from this project going from abandoned to rescued. Once we added our fixes and started updating the module, the community began using it again. Seeing the community jump right back in helped us to understand the value of contributing back to Drupal.

Encouraged by this new understanding of the importance of contribution, we looked for a way to share it with the greater community. In a way, sharing our story about contributing back to Drupal is itself another way to contribute to the Drupal community.

The Speaker Application Process

Since we wanted to share our experience of community contribution and demonstrate there are many different ways one can contribute, we decided to share our story at Drupal camps and DrupalCon. We first applied to Florida DrupalCamp and we did not get in. If something similar happens to you, it's important to not get discouraged. We took that "no" and let it drive us—we only worked harder when we applied to DrupalCon.

We spent a lot of time updating our proposal to DrupalCon. Our hard work and proposal revisions paid off, and we were rewarded with a "yes!" Here are some tips to keep in mind when working on your proposal.

Pick a Topic that Excites You

Pick a topic that you're excited about. If you're passionate about your topic, that will shine through in your proposal (and later on in your presentation). We were very excited about our topic and held it close to our hearts, which fueled our proposal development.

For our DrupalCon proposal, we took a step back and thought about how we could share this experience we were so passionate about, and how we could have our audience understand the importance of this contribution and get excited themselves.

Keep Your Proposal Direct and Concise

Make sure your proposal is direct and concise. It's always helpful to have other people take a look at your proposal and provide a fresh perspective. If you're able to, it's also beneficial to have someone with speaker proposal experience review it.

Select a Catchy Title

Choose a title that's eye-catching and true to the content of your session. Of course, you want your title to create interest, but it's also important to make sure that your session's attendees are getting the content they expected when they chose to attend.

My Experience as a First-Time Speaker

Contributing to TB Mega Menu and presenting at DrupalCon were my first major experiences within the Drupal community. This year, DrupalCon was virtual, and it was a cool experience presenting online. As a first-time presenter, there were a few things I found comforting about presenting virtually. Personally, I felt less nervous because I didn't have to stand on stage and present to a crowd. I felt a bit more casual and comfortable in my own home. There was a chat and Q&A feature so I could see if the audience was engaged in my presentation. Overall, I enjoyed presenting virtually for my first speaker experience.

Co-presenting with my colleague Wade Stewart was another important element of this experience. I had never presented before at any conference, so having a co-presenter for my session helped to alleviate some of the nerves I experienced.

We did a lot of individual practice to get familiar with our own pieces of the story, and we also practiced frequently together to ensure we both felt comfortable and that we had a good flow. For anyone who is interested in speaking at a conference like DrupalCon but who might be hesitant or nervous to do it alone, I definitely recommend finding someone to co-present with. In my experience, it removed a lot of pressure and made the experience more fun.

Giving Back to the Drupal Community

It has been great as a relatively new member of both the Drupal community and Bounteous to be able to speak at DrupalCon and participate in TB Mega Menu. Both of these experiences have really helped me to appreciate and understand how important the community is around Drupal.

I am thankful that I was able to contribute via our module rescue and then contribute again in a non-code way by sharing the experience and speaking at DrupalCon. I encourage everyone to explore the ways that you can give back to the Drupal community! Contributors can earn credits for identifying or fixing problems, contributing code, or a host of other non-technical options like speaking at conferences.

In the latest version of Site Studio, Acquia has introduced a game-changing feature that is sure to challenge Drupal Core's Layout Builder as the premier go-to tool for site builders. Site Studio already has a superb component building and editing experience, but now users can add and edit components live on the page.

In this post, we'll go in-depth on this new feature, plus other recent updates to Site Studio.

Visual Page Builder in Acquia Site Studio

In previous iterations of Site Studio, users could edit existing components live on the page via the Page Editor, but the components had to already exist in the layout canvas field. This worked much like other Drupal page-building tools such as Panels, Layout Builder, and Paragraphs, and was only accessible through contextual links. If a user wanted to add a brand new component to the page, they had to add it via the node edit form. But now, all of that changes.

While the layout canvas is still accessible via the node edit form, content editors can completely assemble a page from the front end, providing an entirely new meaning to the layout canvas field concept. Other than page creation or administrative settings, content editors may have little need to open the node edit form when adding page content. Of course, this all depends on how your site's content types have been architected. Here is a brief tour of the new page builder experience:

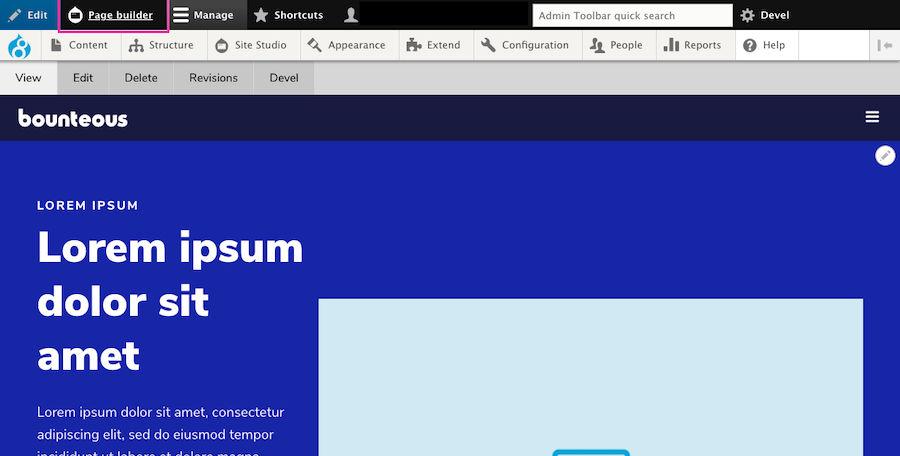

When a user is logged in and on a page that utilizes Site Studio and a layout canvas, they will see a new Page Builder button on the admin toolbar.

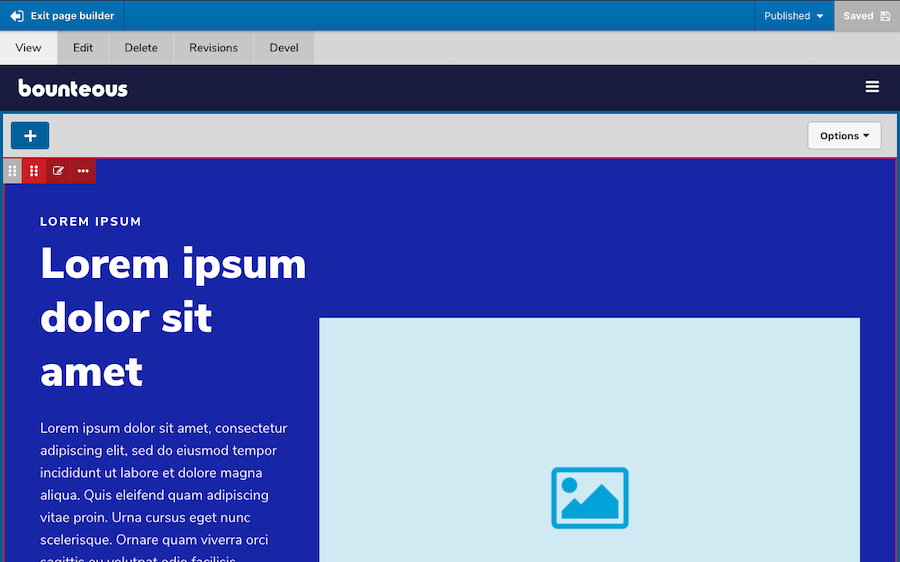

Enabling page builder mode allows users to add, edit, move, delete, and duplicate components, or save them as component content. Users can also save the entire page layout.

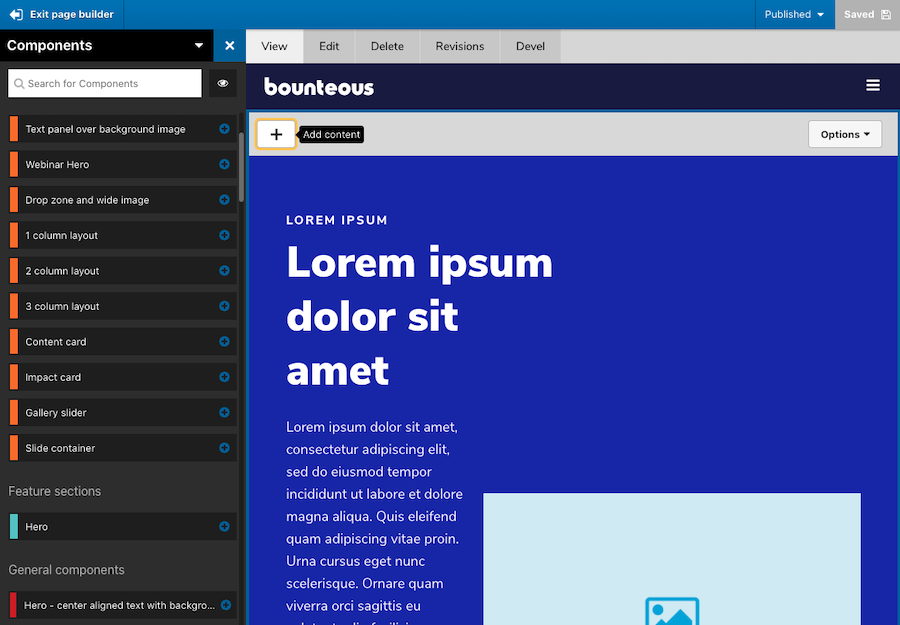

As great as this new experience is, it also stays consistent with what came before: new components or elements are still added via the off-canvas components drawer on the left side. Users don't have to re-learn how to add components; they simply get an improved page building experience.

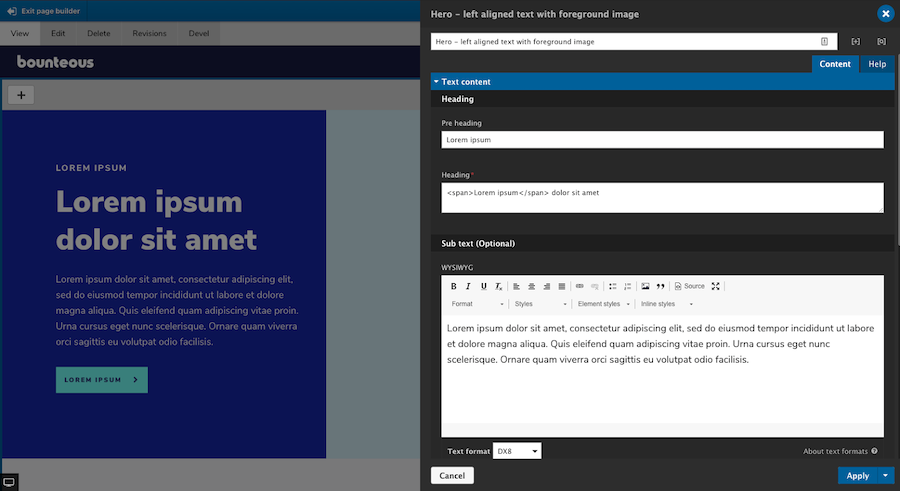

The component editor itself also behaves the same way whether users are using the page editor, visual page builder, or the layout canvas on the node edit form.

The visual page builder is included as a new submodule within Site Studio and has to be enabled before it can be used.

Pro Tip: Developers should also be aware that anytime you update Site Studio, enable new submodules, and/or create or alter components, it's important that you run the import and rebuild functions. This can be done from the Site Studio UI or via Drush commands. For additional information on how the visual page builder works, visit Acquia's Site Studio documentation.

Site Studio's new visual page builder provides a whole new meaning to "what you see is what you get." The page building experience for content editors has never been better or easier, and this new feature alone should be enough to convince you to use Site Studio on your next project.

Other Site Studio Highlights

While the addition of the Visual Page Builder is kind of a game-changer for Site Studio, the latest release also includes some other smaller but no less important features, including some accessibility enhancements, rel attribute support, and more.

Sync Batch Limit Overrides

In previous builds of Site Studio, admins were limited to importing 10 configuration items at a time via Site Studio Sync to reduce the amount of memory required. Acquia has now exposed a setting that allows admins to override this default. By adding the following to a Drupal settings file, you can increase the number of configuration items processed per import batch:

$settings['sync_max_entity'] = 20;

This is one of the few items of Site Studio that is controlled by a developer and must be updated in code. Be aware that increasing this value requires more memory, which can lead to issues.
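To make the trade-off concrete, here is a minimal sketch of how a per-batch limit divides up an import. It is an illustration only, not Site Studio's actual implementation; `toBatches` is a hypothetical helper and the item count of 95 is an invented figure.

```typescript
// Split a list of configuration items into batches of at most maxPerBatch.
// Hypothetical helper for illustration; Site Studio's internals may differ.
function toBatches<T>(items: T[], maxPerBatch: number): T[][] {
  if (Math.floor(maxPerBatch) !== maxPerBatch || maxPerBatch < 1) {
    throw new Error("maxPerBatch must be a positive integer");
  }
  const batches: T[][] = [];
  for (let start = 0; start < items.length; start += maxPerBatch) {
    // Each batch holds more items in memory at once as maxPerBatch grows.
    batches.push(items.slice(start, start + maxPerBatch));
  }
  return batches;
}
```

With 95 items, a limit of 10 yields 10 batches, while a limit of 20 yields 5 batches. Fewer batches means fewer round trips, but each one holds twice as many items in memory.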

Rel Attribute Support

Acquia has also added support for the Rel attribute on the link element. This attribute defines the relationship between the linked resource and the current document. Previously, if users wanted to have Rel attribute options on links, they had to be added by a component builder. Now, when a link uses the type "URL" and the target is set to "New window," a group of checkboxes will automatically appear for the following options:

- nofollow: prevents backlink endorsement, so that search engines don't pass page rank to the linked resource.
- noopener: prevents linked resources from getting partial access to the linking page, something that is otherwise exploitable by malicious websites.
- noreferrer: similar to noopener (especially for older browsers), but also prevents the browser from sending the referring webpage's address.
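The effect of those checkboxes on the rendered markup can be sketched as follows. This is a simplified illustration, not Site Studio's rendering code: `RelOptions`, `buildRel`, and `renderLink` are hypothetical names, and the sketch skips HTML escaping for brevity.

```typescript
// Hypothetical shape for the three checkboxes described above.
interface RelOptions {
  nofollow?: boolean;
  noopener?: boolean;
  noreferrer?: boolean;
}

// Build the space-separated value for a link's rel attribute.
function buildRel(options: RelOptions): string {
  const tokens: string[] = [];
  if (options.nofollow) tokens.push("nofollow");
  if (options.noopener) tokens.push("noopener");
  if (options.noreferrer) tokens.push("noreferrer");
  return tokens.join(" ");
}

// Render an anchor that opens in a new window. The rel attribute is
// omitted entirely when no option is checked.
// Note: href and text are not escaped here; real code must escape them.
function renderLink(href: string, text: string, options: RelOptions): string {
  const rel = buildRel(options);
  const relAttr = rel ? ` rel="${rel}"` : "";
  return `<a href="${href}" target="_blank"${relAttr}>${text}</a>`;
}
```

For example, checking nofollow and noreferrer on a sponsored link produces `rel="nofollow noreferrer"` on the anchor.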

The new Rel attribute options can be found on the Link, container, slide item, and column elements. For the SEO-conscious, note that nofollow stops search engines from passing page rank endorsements to the linked resource. It is often used in blog comments or forums, as these can be a source of spam or low-quality links.

Google and other search engines require nofollow to be added to sponsored links and advertisements. Additionally, the use of the No referrer toggle can affect analytics because it will report traffic as direct instead of as referred.

Nolink Token Support

One under-the-radar update from Acquia is the ability to use the <nolink> token in Site Studio menu templates. Experienced site builders probably know that using the <nolink> token on a menu link renders it as a heading without a link attached. It's a great way to add sub-level menu headings.

In previous builds of Site Studio, users were unable to use the <nolink> token, as it would still render as an anchor tag with an empty href. In 6.5, using <nolink> will result in the menu item rendering with a <span> tag instead. Nothing needs to be done to start using the token, though your menu styles may need to be updated to account for the usage of <span> tags. Also note that if a different HTML element has been specified in your Menu Template, that setting will take priority.
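The rendering difference can be sketched like this. It is a simplified illustration rather than Site Studio's template logic: `renderMenuItem` is a hypothetical function, the non-link element is assumed to be a `<span>` (as in Drupal core's handling of `<nolink>`), and the Menu Template override mentioned above is left out.

```typescript
// Render one menu item. A URL of "<nolink>" means "heading, no link".
// Assumption: the non-link element is a <span>; escaping is omitted.
function renderMenuItem(title: string, url: string): string {
  if (url === "<nolink>") {
    // No anchor and no empty href: just a plain wrapper around the title.
    return `<span>${title}</span>`;
  }
  return `<a href="${url}">${title}</a>`;
}
```

Because heading items no longer render as anchors, any menu CSS that targets only `a` elements would miss them, which is why menu styles may need updating.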

Accordion Accessibility Enhancements

Accessibility is a moving target. Keeping a site up-to-date with accessibility enhancements is one of the more important responsibilities we have, and Site Studio is no exception.

In this version, Acquia has added some accessibility improvements to the Accordion element for the end-user. The header links will now have an aria-expanded attribute, which toggles between true and false when expanded and collapsed, respectively.
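The toggling behavior described above follows a common pattern, sketched below. This is not Site Studio's own script: `AttributeHost` is a hypothetical stand-in for the two DOM element methods the sketch needs, so the logic can also run outside a browser.

```typescript
// Minimal stand-in for the part of a DOM element this sketch uses.
// A real HTMLElement satisfies this interface.
interface AttributeHost {
  getAttribute(name: string): string | null;
  setAttribute(name: string, value: string): void;
}

// Flip aria-expanded on an accordion header and return the new state.
// A missing attribute is treated as collapsed.
function toggleExpanded(header: AttributeHost): boolean {
  const expanded = header.getAttribute("aria-expanded") === "true";
  const next = !expanded;
  header.setAttribute("aria-expanded", String(next));
  return next;
}
```

In a browser, this would typically be called from the header's click handler, so assistive technology can announce whether the panel is open or closed.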